Fast Visualization of JSON Data

Dean Compher

30 January 2016

UPDATED 16 February 2018

The Schema Discovery Process discussed in this article is no longer available. Please disregard this article. For those already using it, the feature will be discontinued by May 31 and you will need to use an alternative. The SDP is also known as the Cloudant Data Warehousing feature. Please see this page for Alternatives to the Db2Warehouse on Cloud Feature.

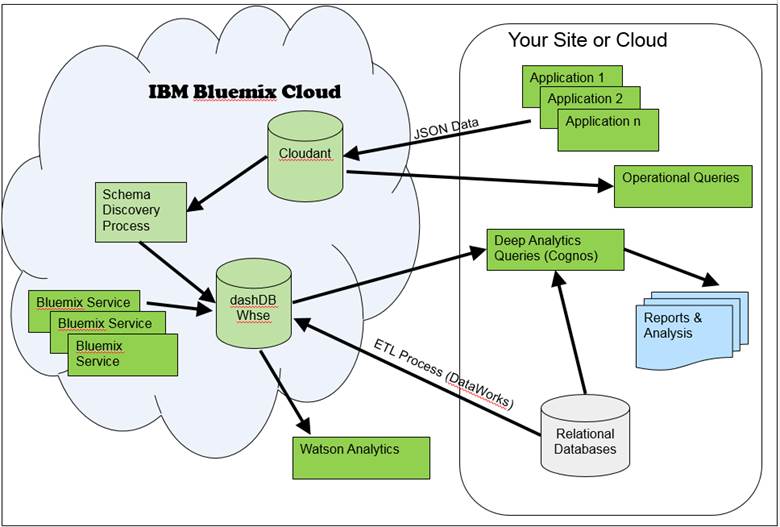

If your applications are generating data using JSON, but you are unable to do good analysis in a timely manner because all the good analytics tools like Cognos and your own SQL scripting only work on relational or delimited data, then you should consider the Bluemix cloud solution. With this solution you can store your JSON documents in the Cloudant NoSQL database and automatically get that data into the dashDB relational warehouse with little effort. Once there you can use Watson Analytics to visualize the data without even writing queries, or use Cognos or other tools to visualize data using your queries. This solution uses the Bluemix Schema Discovery Process (SDP) to create a relational schema in dashDB based on the Cloudant JSON documents and then begins copying the data automatically. Since dashDB does not use indexes, materialized views or other complex data warehouse objects to get great performance, you don’t need to worry about doing a lot of schema design work. Cloudant, dashDB and Watson Analytics have many advantages individually, but taken together they provide results amazingly fast. This is also a fast and inexpensive way to see if there is anything worth analyzing in your JSON data before investing in a larger scale analytics system.

Use Cases

Since JSON is becoming used more and more to exchange data between applications within and between organizations, it is becoming quite widely used and known to developers. Because of this, being able to store and query transactional data in those JSON documents without converting them to relational or other database formats saves considerable resources. Web applications and applications on mobile devices are already using JSON stores in a big way and much investment is being made in JSON stores, and Cloudant is no exception. However, these data stores do not lend themselves well to complex analytics queries. So if you already have an application that uses JSON documents or you are developing a new application and you want to see what the data can tell you, then using the Cloudant, SDP and dashDB process is a great way to enable analytics without a lot of effort or expense. Here are a couple of uses cases that come mind. I’m sure that there are many others.

Case 1

I am writing a new application for mobile devices that I believe will be wildly popular, so I need a JSON store that can expand as fast as the growth of my application and allow me to analyze what people are doing so I can optimize the experience. To maximize the growth of my application I not only need to analyze how people are using my application, I need to combine that usage information with what my users like and don’t like based on what they are saying on Twitter.

Because I need to

start small due to lack of current funds, need a data store that can grow as

fast as my app, and am going to use JSON anyway, Cloudant is a great choice for

my JSON store. Further, I want to do analytics from a relational

database, but don’t really know anything about data warehouses, so using dashDB

and the SDP to load it from Cloudant is great choice for me too. Twitter

data from my users is also easy to add to my dashDB database because the Insights for

Twitter

service is also on Bluemix and I just need to deploy it when I’m ready as shown

in this video. This

service is great because text analytics is not my forte and the service “reads”

the Tweets and can easily add user sentiment to my dahsDB database.

Because I need to

start small due to lack of current funds, need a data store that can grow as

fast as my app, and am going to use JSON anyway, Cloudant is a great choice for

my JSON store. Further, I want to do analytics from a relational

database, but don’t really know anything about data warehouses, so using dashDB

and the SDP to load it from Cloudant is great choice for me too. Twitter

data from my users is also easy to add to my dashDB database because the Insights for

Twitter

service is also on Bluemix and I just need to deploy it when I’m ready as shown

in this video. This

service is great because text analytics is not my forte and the service “reads”

the Tweets and can easily add user sentiment to my dahsDB database.

Once I have the data from my application and Twitter sentiment data in my warehouse, I’m going to us Watson Analytics explore my data in dashDB using natural language questions as well as using Watson Analytics’ predictive capabilities. From this I’ll be able to see what complex queries I might want to write from my favorite report writer like Cognos.

Case 2

I have a web application and I want to analyze user behavior to see which content on the site lead to them taking actions that I want such as making a purchase or clicking a certain set of links. I also suspect that weather has an impact on behavior and I may want to measure that impact later. I already know how to write good analytic queries in SQL, but I don’t have the time or funds to buy equipment and design a data warehouse and then write Extract, Transform, and Load jobs to populate that warehouse.

In this case I could deploy appropriate sized Cloudant and dashDB systems in the cloud and make some small modifications to my applications to capture what users do as they do it and store those transactions in the Cloudant store. I would also invoke the SDP process to create the relational schema in dashDB. Once I began gathering the data I can do simple queries against the Cloudant database to determine things like which pages are the customers visiting. After I feel enough data is in the dashDB warehouse, I can begin launching my complex analytical queries to show which content and offers placed on my site lead to the desired behavior for various types of customers. This allows me to determine which content to display for various types of customers and under what circumstances. Because Cloudant also allows queries, I can watch for the patterns of usage on the site discovered in my analytics process and dynamically change the customer experience to drive the behavior I want. If I had historical data stored somewhere else that I thought would help the dashDB analysis, I could either load it into Cloudant letting it flow through to dashDB, or just load it into dashDB directly.

Once my users start seeing a lot of value from my analysis and reports they start asking for lots of other data to be added to my warehouse to get a better picture of user behavior. Since one of those data sets is weather data and I’m using IBM Bluemix, I can easily start adding that data to the warehouse because the Insights for Weather service is available. I just need to deploy it. Now my analytics queries can show how weather impacted user behaviors from the historical data, and then I can use the weather forecast data to begin predicting behavior, using the Predictive Analytics service against my dashDB consolidated set of data.

How does it work?

Thedeployments of theCloudantJSON store anddashDBrelational data warehouse are done as you would anyother cloud databases, but I describe each of them a bit more below. Thereally interesting thing about this is the Schema Discovery Process (SDP). This process first scans your JSON documents for the data elements andgenerates a relational schema and deploys it to thedashDBdatabase. Once that is done it will copy the data from yourCloudantstore. After that it will copy new data at an interval that you specify keeping thedashDBwarehouse up to date. There are a few reasonswhy this saves so much time and effort including:

1. TheSDP automates the process of translating the JSON schema into a relationaldatabase for data warehousing. That means that it creates a set of tablesand columns in those tables instead of you having to do that. This can bea very time consuming to do this right if you had to do it manually. It’snot magic though. The better you name the elements in the JSON documentsthe better your table and column manes will be indashDB. However, with a little additional work you can get the column names you want.

2. The transformationof data from the JSNO NoSQL documents into relational tables is not a trivialtask. Having the SDP create this transformation automatically not onlysaves a lot of time and testing, it also means that you don’t need to purchasean ETL tool. Further, automating the replication of the data with a fullymanaged service means that you don’t need to configure and monitor those jobs.

3. dashDBis a deploymentofDB2 BLUtechnology andthat means that you get extremely fast performance without the need forindexes, materialized query tables and other data warehousing objects that takea lot of time and skill to design properly.

Thislink provides a good description of how you configure theSchema DiscoveryProcess. This article was written for the beta testers, so a number of the restrictionslike the size of the databases and a single copy of the data have beenlifted. It is a good description of the process, none the less, and has alink at the top that you can click to see exactly what is now available.

This architectureis also quite flexible, meaning that you can add data to yourdashDBdata warehouse from a variety of sources as shown inthe diagram above. These include data from your own databases includingtransactional systems and services provided byBluemixlike the Twitter and weather feeds. Just add this data to differenttables. You do not want to modify the tables being loaded by theSDP. Further, you can query the data in a variety of ways. For transactional type queries you can useCloudant’squery language and get results based on up-to-date data. For more complexqueries you can use your favorite business analytics tool likeCognos. If you just want to ask questions and nothave to use any querylanguagethen you can use WatsonAnalytics to explore your data.

Hereis a bit more information on some of the components discussed here.

Cloudant

Cloudantis a NoSQL JSONdata store based onCouchDB. While there is anon-premises version, it is primarily used in the cloud needs to be in the cloudto use the SDP feature discussed in this article. The full paid versionallows for redundant copies of the data across data centers. You canstart small with an inexpensivedeployment, butgrowvery quickly if needed.

dahsDB

dashDBis a fullymanaged relational database in the cloud built onDB2 BLUtechnology withintegrated analytics features built in like R, Spatial and others. dashDBis great in this scenario because you don’t reallyneed to engineer the database schema to get great performance. Part ofthe reason is that the SDP does an adequate job of creating the tables, butmostly it is because of theDB2 BLU technologythat allows forfast query response without the need for indexes, materialized query views, andthe many other objects that take a lot of time and expertise to develop. This technology that brings together several complementary features that allowthe right data to be scanned extremely quickly including columnar tables,compression, in-memory algorithms, data skipping and others. If you useda different database technology, you would need to engineer all of theseobjects yourself to get decent performance. You do not need to limit thedashDBdata to theCloudantfeed. You can augment that data with weather data, Twitter data andothers fromBluemixservices or feeds from your owndatabases as shown in the diagram above. Even if you don’t have good SQLskills, Watson Analytics can easily be connected so you can just ask questionsand get answers about your data.

TryBefore You Buy

TryBefore You Buy

Doesthis sound too good to be true? Then try it for yourself. You candeploy a30 daytrial for free to see thefunctionality. There are limitations on the size, but you can see allfunctionality. You will start by signing up forBluemix. Thenfrom theBluemixdashboard deploy a “CloudantNoSQL DB” and then launch it. From there youfollow the instructions in theSchema DiscoveryProcesspage that include a step for deploying yourdashDBdatabase.

Onceyou see that this will work, you can buy services that allow for a productionsized data set with better performance characteristics. Even with thepurchased plans you can get an analytics database going quickly and at low costusing the process described here. This is a great way to proceed if youknow that there are secrets locked in your data, but even if you are not sure,this is a very cost-effective way to find out. Even if you find thatthere is no real value in the data, you can discontinue the service after ashort time and you only paid for what you used.

***

Thesetools are undergoing tremendous development in an agile methodology so newfeatures are appearing all the time and restrictions are beinglifted. So if you see any great features that I missed or thatappear after I post this article, please post them to myFacebook Pageordb2Dean andFriends Community.

HOME|Search